Notes for lectures, January 2006, at City of Westminster College.

Simple Harmonic Motion

When I was learning physics, the lecturer used to say, ‘there’s nothing simple about simple harmonic motion!’

From the maths point of view, he’s probably right, but in the natural world, SHM is the basis of all movement and sound.

When the wind blows a stalk of corn, the stem bends, and then because it has some weight of its own, it bends a little more; further than the wind would blow it. Then it springs back; again, beyond where it would have rested. That all seems simple because it’s so natural; we all see the effect every day in everything we do.

The easiest model to demonstrate and understand SHM is a spring and a weight.

When the weight is pulled down below its resting place, and then released, it will spring back to its original resting place and then go on beyond it, setting up an oscillation. The oscillation gradually dies down because of air resistance and a resistance to deformation in the spring.

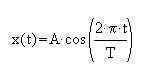

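Its position x as a function of time t is:

$$x(t) = A \cos\left(\frac{2\pi t}{T}\right)$$

where A is the amplitude of motion: the distance from the centre of motion to either extreme. T is the period of motion: the time for one complete cycle of the motion. (Time is measured here from the moment of release, when the weight is at one extreme.)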

If you could attach a pencil to the weight, and pull a piece of paper across the weight as it was bouncing, the line you get is a sine wave.
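As a rough illustration of that trace, here is a minimal sketch of the ideal (undamped) motion. It assumes the weight is released from rest at one extreme, so that the position follows A·cos(2πt/T); the function name `shm_position` and the values for amplitude and period are made up for the example.

```python
import math

def shm_position(t, amplitude, period):
    """Position of an ideal oscillator released from rest at +amplitude (assumed start)."""
    return amplitude * math.cos(2 * math.pi * t / period)

# Hypothetical values for illustration: amplitude 2.0 (say, cm), period 0.5 s.
A, T = 2.0, 0.5

# Sample one full cycle at eighths of the period, like marks along the pencil line.
for n in range(9):
    t = n * T / 8
    print(f"t = {t:.4f} s   x = {shm_position(t, A, T):+.3f}")
```

The printed positions swing from one extreme, through the centre, to the other extreme and back again in exactly one period.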

All the physics courses treat SHM like this. For the purposes of the maths, the assumptions are that the elasticity of the spring is perfect and that there is no air resistance, or that the oscillations will die down because the air resistance and the modulus of elasticity are constant. But that’s not quite true, and this is where SHM becomes relevant to understanding quality audio.

During the oscillation of the spring and the weight, when the spring is elongating, the resistance to deformation of the spring increases slightly as the spring becomes longer, then, as the weight moves upwards, that resistance to deforming in the metal becomes less.

What I’m really saying is that while the weight seems to follow that perfect mathematical format, in reality it deviates slightly.

The same thing happens to a greater or lesser extent with all things that oscillate; and in the natural world these deviations are remarkably similar.

When something oscillates in air, within the range of about 20 oscillations per second up to many thousands of oscillations per second, then of course we can hear it. It’s sound.

So, going back to Simple Harmonic Motion, the so-called ‘perfect’ form of oscillation or sound is an object moving, causing pressure changes in the air that follow the ‘simple’ maths of SHM. We call that a sine wave, and looking at it from the other direction, as a sound engineer, the sine wave is a pure sound: a sound that has a single frequency and nothing else.

But all musical sounds have overtones, as we used to call them: harmonics that are related to the basic or ‘fundamental’ tone. Now all that stuff about SHM should start to fall into place. When that weight we were talking about fails to follow the exact path predicted by the SHM maths, it actually creates an ‘overtone’ or, more usually, lots of overtones. Thinking of it as a wave once again, that ‘distortion’ of the wave not following the SHM path creates ‘harmonics’; you were expecting that! But did you realise that the harmonics created are called ‘even order harmonics’ and that they are all musically related to the fundamental frequency?

So, when a wave is slightly distorted in one direction, that is, where the lower half of the wave is not exactly the same as the upper half, then the ‘overtones’ created are called ‘even order’, they are musically related to the fundamental frequency, and they sound pleasant to the ear.

Interestingly, this is the commonest form of ‘distortion’ in nature, starting with the human voice, which is, after all, just breath being blown past the oscillating vocal cords. The breath is moving one way, so the waveform of the sound produced is not quite symmetrical; it has overtones or harmonics, and so it sounds interesting and pleasant.

On the other hand, since we have been able to create pure waves and amplify sound, and particularly since we have been converting that sound into digits, there is another type of ‘overtone’ that has become more and more common. This is ‘odd-order’ distortion, and it’s caused when a waveform is altered from its pure form symmetrically, top and bottom, which can easily be achieved by, say, overdriving an amplifier, or trying to push too much into an A to D converter. This kind of distortion is, of course, entirely unnatural and so human ears are just not used to it! It sounds harsh and unpleasant and, just to make matters worse, the human ear is very sensitive to it.
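The difference between one-sided and symmetrical distortion can be checked numerically. What follows is only a rough sketch, not a model of any real voice, amplifier or converter: the quadratic ‘bend’ (0.1 times x squared) and the clip level (0.8) are arbitrary values chosen for illustration, and `harmonic_levels` is a hypothetical helper that measures each harmonic with a plain discrete Fourier sum over one cycle.

```python
import cmath
import math

def harmonic_levels(wave, max_harmonic=5):
    """Return {harmonic number: amplitude} for a list holding exactly one cycle."""
    n = len(wave)
    levels = {}
    for k in range(1, max_harmonic + 1):
        # Plain discrete Fourier sum at k times the fundamental frequency.
        total = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(wave))
        levels[k] = 2 * abs(total) / n
    return levels

N = 256  # samples in one cycle (arbitrary)
pure = [math.sin(2 * math.pi * i / N) for i in range(N)]

# One-sided distortion (assumed shape): upper and lower halves end up different.
one_sided = [x + 0.1 * x * x for x in pure]

# Symmetrical distortion (assumed shape): top and bottom clipped identically.
clipped = [max(-0.8, min(0.8, x)) for x in pure]

for name, wave in (("pure", pure), ("one-sided", one_sided), ("clipped", clipped)):
    levels = harmonic_levels(wave)
    print(name, {k: round(v, 4) for k, v in levels.items()})
```

With these toy values the one-sided wave gains a 2nd harmonic and essentially no 3rd, while the clipped wave gains 3rd and 5th harmonics and essentially no 2nd or 4th. Real one-sided distortion is rarely a pure square-law bend, so in practice the split is less clean than this.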

To put things into perspective, if we amplify a piano, say, and the amplifier is not particularly good, then it’s possible that we could add 2nd harmonic distortion to the sound of the piano of, say, 0.2%, which means that the waveform we have recorded has deviated from the wave that we put in by 1 part in 500. That doesn’t sound very much, but it’s clearly audible at that sort of level.

However, if the distortion created in the amplifier was ‘odd order’, that is, 3rd, 5th, 7th, etc., then the amplifier would have to be a whole lot better for it still to sound good. In fact, it would need to have a distortion performance of better than 0.001% to get away with it, and that represents an error of only one part in 100,000.
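The arithmetic behind those two figures is easy to check. This small sketch converts a distortion percentage into ‘1 part in N’; the decibel figure is not quoted in these notes and is added here only as the conventional way engineers express the same ratio. The function name `describe_distortion` is made up for the example.

```python
import math

def describe_distortion(percent):
    """Return (parts, decibels): '1 part in N' and the same ratio in dB."""
    ratio = percent / 100.0
    parts = round(1.0 / ratio)
    decibels = 20.0 * math.log10(ratio)  # amplitude ratio expressed in dB
    return parts, decibels

for percent in (0.2, 0.001):
    parts, decibels = describe_distortion(percent)
    print(f"{percent}% = 1 part in {parts:,} = {decibels:.1f} dB")
```

So 0.2% is 1 part in 500 (roughly 54 dB below the signal) and 0.001% is 1 part in 100,000 (100 dB below the signal).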

Amplifier Classes and Overloads

So where is all this leading? It heads towards an appreciation of a number of those terms we glibly use when talking and writing about ‘quality’ and ‘distortion’.

‘Harmonics’ are everywhere; they are a part of all the sounds we hear.

But the harmonics that occur in nature, and more specifically in music are predominantly asymmetric, one sided, 2nd order, leaning one way like grass, or like the little hairs in your inner ear that react to the sounds that we hear.

Back in the days when all amplifiers were ‘valve’, the amplification was done by making small voltage changes to a valve electrode (grid), and that caused a much larger change to an electric current flowing between two other electrodes (the cathode and anode); a sort of ‘tap’ effect, which was why it was called a ‘valve’.

The accuracy of the amplification was quite reasonable; the distortion or non-linearity produced was of the order of 1 part in 1000, about 0.1%.

And because the amplifier was modifying a current flow in one direction (a bit like the vocal cords really!), the type of distortion produced was mainly 2nd order, so it was pleasant to the ear.

This type of amplifier is called ‘class-A’.

An interesting and really useful attribute of this type of amplifier is that as you push more audio into it, the output keeps on going up and, of course, the distortion keeps on increasing, but the point at which it finally ‘gives up’ is a long way above the normal operating level.

Then came transistors and a much more refined design philosophy of feedback amplifiers. Distortions could be reduced to unheard of(!) levels, but why did they sound so awful?

The answer to that question is far from simple, but there are a few clues that explain a lot……

The first factor is the type of distortion; transistor (and IC) amplifiers are designed by engineers who like symmetrical things, they like tidiness and will go to great lengths to achieve the exact specification requirements; but will often (always?) miss related factors that are not in the spec.

The result is that, paradoxically, the amplifier or piece of equipment that has the best-looking spec will usually sound the worst. But it’s not really a paradox; it’s just the way specs have evolved. Super-low noise performance inevitably means poor overload margin; poor overload margin means transient distortion, and if the amplifier is a modern IC device, that distortion will be predominantly 3rd order, and will sound quite disgusting.

Older amplifiers, circuits and complete equipment such as tape recorders had technical specifications that look pathetic today, and yet they often sounded really good. I always quote the spec of my first ‘professional’ tape recorder; it was actually a present; Joe Meek gave it to me. It was an EMI TR51A, a mono full-track ¼ inch machine. The frequency response spec was ‘100 Hz to 8 kHz’! And I don’t think they even mentioned distortion, yet many records in the early 60s were mastered on that very machine.

Compression and Limits

As we have seen, technology and natural hearing don’t mix too well… we have to go to extreme lengths so that the kinds of distortion that occur in our reproduced sound are the right sort!

Another aspect of sound where the natural world and technology collide is in dynamic range.

Prior to the invention of the electronic amplifier, recording had been a matter of getting as much acoustic information as possible onto the recording medium.

At this point it’s worth a quick definition. I’m sure that you know this very well, but just in case, and to avoid confusion, here is the exact difference between limiters and compressors.

A limiter is a device which allows audio signals through unmodified up to a certain threshold, beyond which the amplifier gain is reduced so that the level never exceeds that threshold.

A compressor is an amplifier whose gain reduces as the input level increases.

The purpose of a limiter is to eliminate momentary overloads; the purpose of a compressor is to reduce dynamic range.
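Those two definitions can be put side by side as input/output curves. This is a minimal sketch of the steady-state behaviour only (no attack or release timing), working in decibels. The compressor’s threshold and ratio parameters follow the usual convention rather than anything stated in these notes, and the function names and the numbers used are made up for the example.

```python
def limiter_out_db(in_db, threshold_db):
    """Unmodified below the threshold; the output never exceeds the threshold."""
    return min(in_db, threshold_db)

def compressor_out_db(in_db, threshold_db, ratio):
    """Above the threshold (assumed), `ratio` dB more input gives 1 dB more output."""
    if in_db <= threshold_db:
        return in_db
    return threshold_db + (in_db - threshold_db) / ratio

# Hypothetical settings: limiter at -10 dB, compressor at -20 dB with a 4:1 ratio.
print(" in    limiter  compressor")
for in_db in (-40, -30, -20, -10, 0, 10):
    lim = limiter_out_db(in_db, -10)
    comp = compressor_out_db(in_db, -20, 4)
    print(f"{in_db:4d}   {lim:7.1f}  {comp:10.1f}")
```

The limiter column flattens completely at its threshold, while the compressor column keeps rising, only more slowly, which is the reduction in dynamic range. Set the ratio very high and the compressor curve approaches the limiter’s, which is one way of seeing why the two overlap in practice.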

In the real world there is a very great deal of overlap between the two…. While limiters are used in the mastering process to avoid overloads, they are also often added to the path in speech or vocal recording where the engineer thinks of them as a way to control transients, although in practice, when used like that, they work more like a compressor.

Compressors are used at all stages of the recording process by many engineers, myself included, unless you are recording classical music, where any compression would be (should be) spotted and condemned… although I must admit that I did use the tiniest spot of compression on the violin microphone when I recorded this piece, played by Miriam Kramer.

(Excerpt of Miriam Kramer recording.)

The first commercial compressors weren’t called compressors at all, they were called ‘levelling amplifiers’ and it’s obvious why…. These were variable gain amplifiers that automatically controlled the level of dialogue signals in film sound-track recording.

Record producers and audio engineers very quickly adopted them for use in sound recording studios; of course, very many records in the late 1920s and 1930s were made in the film studios of the time.

A popular type of compressor developed in the late 1930s was the one that eventually turned into the highly commercially successful Universal Audio LA2, and this is where the definitions start to get somewhat mixed. The LA2 works on the principle of having some device that reduces the audio signal by shorting it out when the level gets too high, which, strictly speaking, describes a limiter rather than a compressor.

The LA2 is a valve amplifier, but connected from its grid to the grid bias point (actually ‘ground’) is a photo-sensitive resistor made of cadmium sulphide. When the audio signal gets above a threshold, a voltage is produced in a ‘sidechain’ that activates an electroluminescent panel mounted against the cadmium sulphide cell. This makes the resistance of the cell reduce, which in turn effectively alters the gain of the amplifier.

Much later, in the 1970s when LEDs became readily available, the opening was clearly there for an improved version to be designed, but it was not until 1992 that any serious development took place…. That was the time when I needed a compressor for some recordings I was doing; it was actually the soundtrack for a travelogue video. I was trying to do the whole thing on a shoestring budget, and certainly could not afford to buy any of the compressors on the market. So, I remembered the way we used to make compressors in the 60s, and applied a little bit of ‘servo amplifier’ thinking to the problem; the result was the original Joemeek compressor.

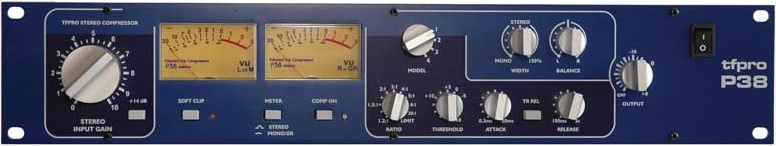

But time moves on, and in 2003, with a new brand and less exotic colour, I developed a much more versatile stereo compressor for mastering, combining the requirements for limiting and compression, and with the introduction of some tricks with imaging…

The P38 processes the stereo audio signal into ‘sum and difference’, that is, it adds the left and right signals to get ‘sum’, and electronically subtracts right from left to get ‘difference’ (sometimes called ‘M and S’ or ‘middle and side’).

The compression and limiting are done in that processed state; the signals are then converted back into ‘left and right’. The reason for all that complication is that when we listen to any sound source, our ears are very sensitive to shifts in the sound, left and right. Trying to compress two linked channels with optical compressors is never very accurate, and there are always small errors in the compression depth, causing left/right shifts in the image. This is completely eliminated when using ‘sum and difference’; any errors show up as tiny changes in ‘width’, and this is not recognisable by the ear.
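The effect of a small gain mismatch can be shown with a few lines of arithmetic. This is only a sketch of the sum and difference idea, not the actual circuit: the halving on the way back to left and right is an assumption about where the scaling sits, the gain figures are invented to represent two compressors that disagree slightly, and the function names are made up for the example.

```python
def to_sum_difference(left, right):
    """Add the channels for 'sum'; subtract right from left for 'difference'."""
    return left + right, left - right

def to_left_right(total, difference):
    """Convert back; the halving (assumed) restores the original scale."""
    return (total + difference) / 2, (total - difference) / 2

# A centred source: identical signal in both channels.
left, right = 1.0, 1.0

# Two compressors that should match but don't quite (hypothetical gains).
gain_a, gain_b = 0.50, 0.55

# Linked left/right compression: the mismatch lands on one side.
lr_result = (left * gain_a, right * gain_b)

# Sum/difference compression: the mismatch lands on the difference signal.
total, difference = to_sum_difference(left, right)
sd_result = to_left_right(total * gain_a, difference * gain_b)

print("left/right processing:    ", lr_result)
print("sum/difference processing:", sd_result)
```

In the left/right case the centred source comes out louder on one side, so the image shifts. In the sum/difference case the two outputs stay equal, because a centred source has no difference signal for the mismatched gain to act on; for wider material the same error only alters the proportion of difference to sum, which is heard as width rather than position.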

(compression demo)

©Ted Fletcher 2005